About

***We are currently experiencing technical issues with esthetic elements of our website. Thank you for being patient with us while we work to correct them. The website should otherwise function normally. If you experience specific issues accessing content, don't hesitate to email us at info.mjmmed@gmail.com***

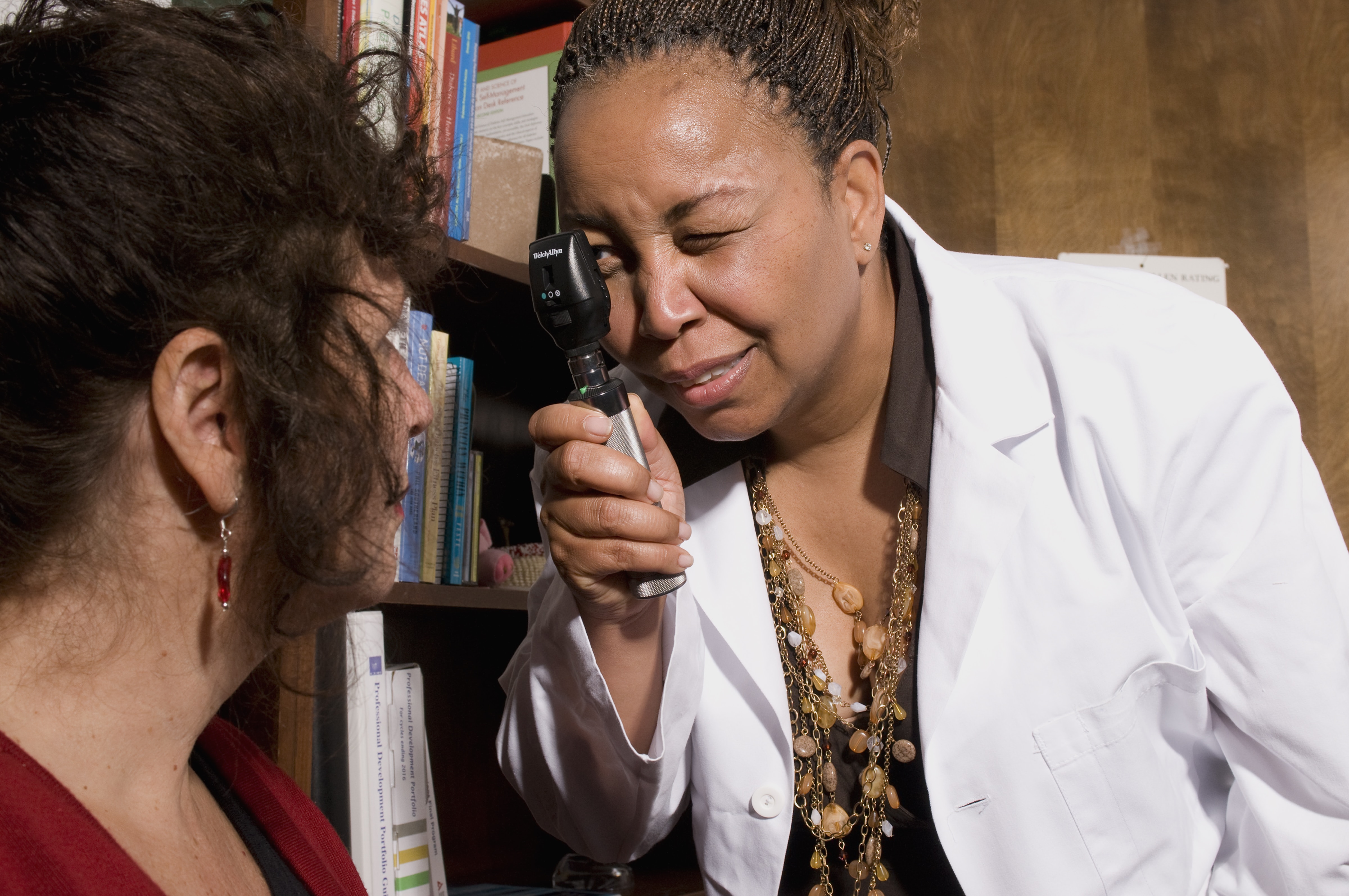

The McGill Journal of Medicine (MJM) is an open-access, international, peer-reviewed publication run entirely by the medical and science students of McGill University. Re-launched in 1994 and again in 2015, the MJM's mandate is to provide students with the opportunity to publish on all aspects of medicine and to open up dialogue on a variety of medical issues, including education, practice, and research.

Announcements

-

2021-07-14

Now Available: Approach To Issue

Our new “Approach To” Issue (Volume 19, Issue 2) features a special introduction from our very own Dr. Stuart Lubarsky, neurologist and associate professor at McGill University. This issue is a guidebook made by students for students, containing a series of clinical vignettes to help tackle common medical conditions from presentation to management. Cover artwork by Athena Ko.

-

2021-07-14

Now Available: New Annual Issue

Our new Annual Issue (Volume 19, Issue 1) features a foreword from Dr. Neil Goldenberg, MJM's second Editor-in-Chief, and highlights articles born from research and reflections during the worldwide COVID-19 pandemic. Cover artwork by Hosanna Galea.

-

2020-10-29

Call for submissions: Special Nursing Issue

We are now accepting submissions for an upcoming nursing issue highlighting and celebrating the contribution of nurses to patient care, advocacy, and research! We accept original research, brief reports, letters to the editor, reviews, commentaries, creative essays, and more!

Artwork welcome as well!

Deadline: February 15, 2021

https://mjm.mcgill.ca/articletypes